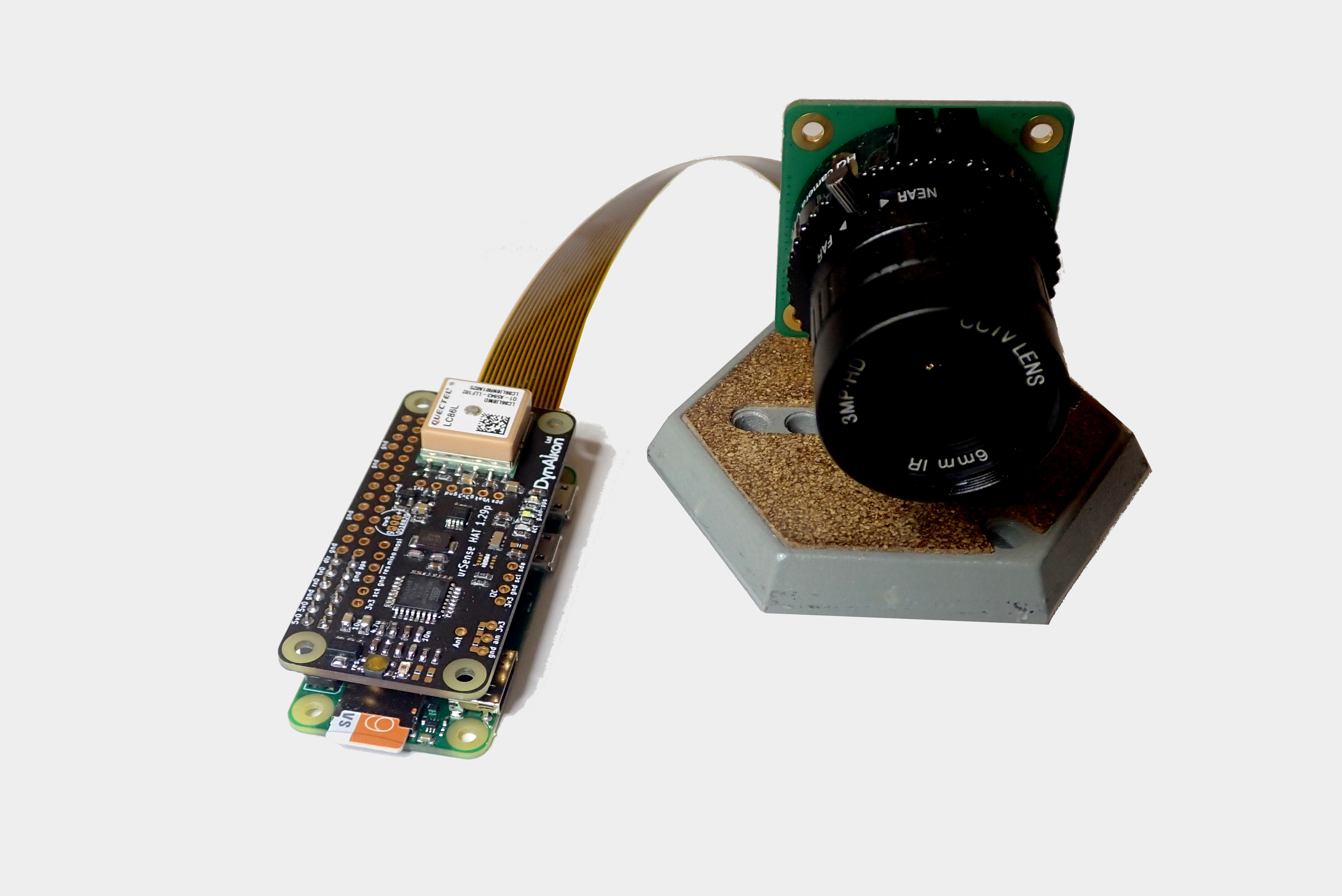

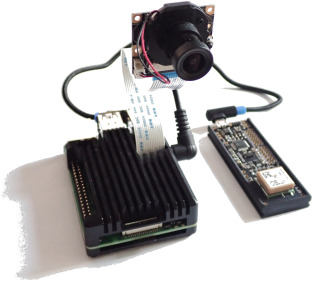

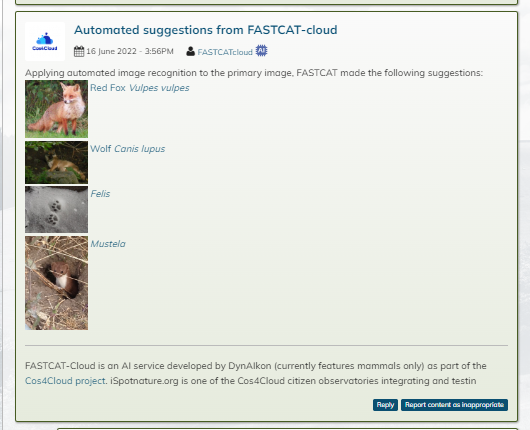

DynAIkon Trap listed under the inventory of Wildlabs

DynAIkon Trap is now listed in the Wildlabs Inventory. The Inventory is a dynamic, wiki-style discovery platform for conservation technology. It is the place to explore what technology is available for your work, how others worldwide use it, and what the conservation tech community recommends.
